Qualitative Study Insights

Understanding Your Qualitative Results

Once your sessions are complete, all your recordings, transcripts, highlights, themes, and analytics are available in the Results tab. This article explains what each section shows and how to use it to move from raw session data to clear findings.

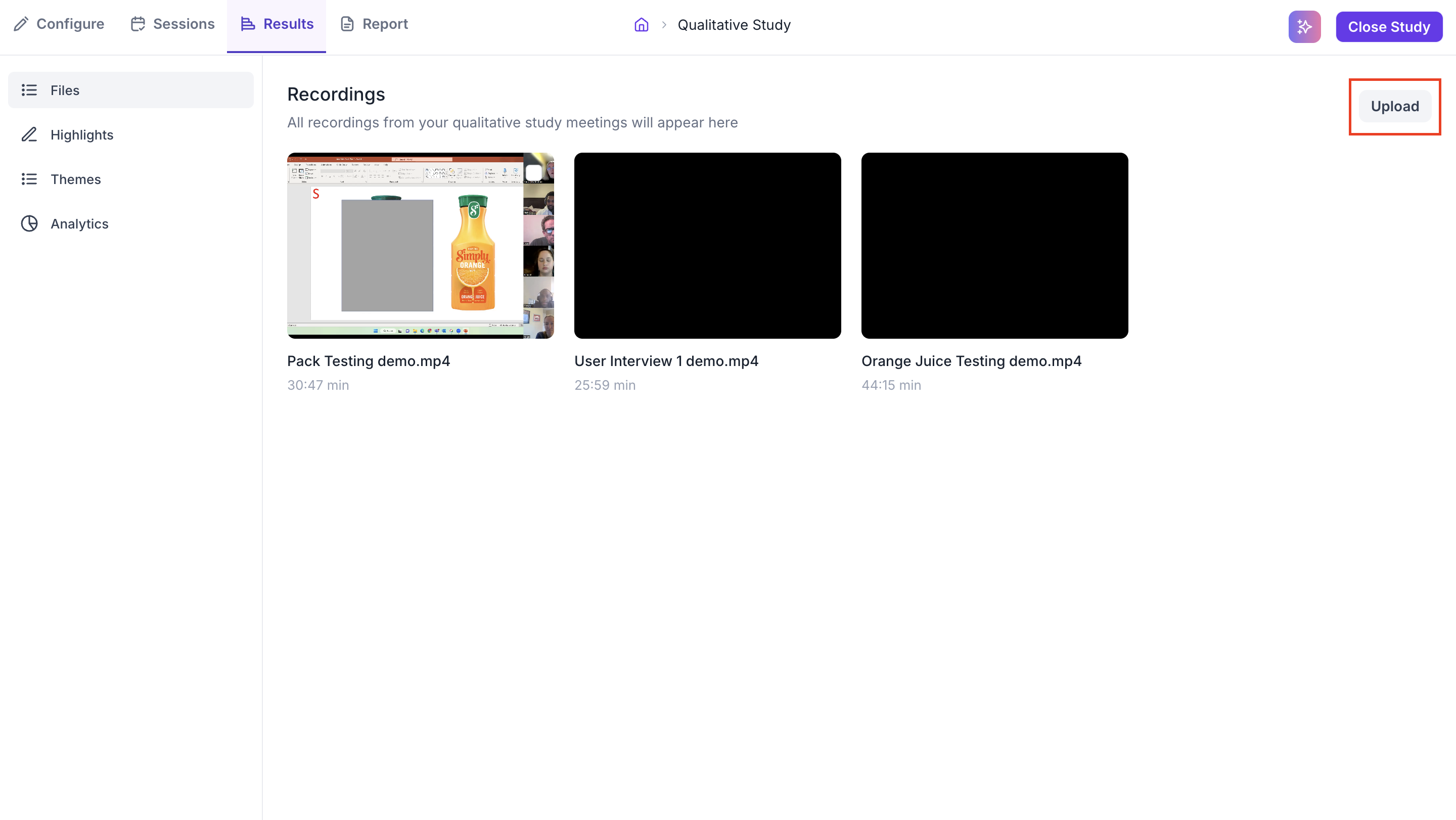

FILES

The Files section shows all recordings associated with your study as thumbnails. Each thumbnail displays the file name and duration. Sessions conducted live in Decode appear here automatically once the session ends. If a session was conducted on an external platform such as Zoom or Google Meet, you can bring that recording into Decode by clicking Upload in the top right.

Supported upload formats: .wav, .mp3, .mp4, .m4v, .mov, .doc, .docx, .ppt, .pptx, and .pdf. File size must be between 10 KB and 5 GB with a minimum duration of 5 seconds.
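If you are preparing many external recordings, the limits above can be checked locally before you upload. The following is a minimal sketch, not part of Decode: the function name `check_upload` is hypothetical, it assumes binary units (1 KB = 1,024 bytes, 1 GB = 1,024³ bytes), and it assumes the duration rule is only checked when a duration is supplied.

```python
from pathlib import Path

# Limits as stated in this article. The pre-check itself is hypothetical
# and runs entirely on your machine; it does not call Decode.
SUPPORTED_EXTENSIONS = {
    ".wav", ".mp3", ".mp4", ".m4v", ".mov",
    ".doc", ".docx", ".ppt", ".pptx", ".pdf",
}
MIN_SIZE_BYTES = 10 * 1024        # assumption: 1 KB = 1,024 bytes
MAX_SIZE_BYTES = 5 * 1024 ** 3    # assumption: 1 GB = 1,024^3 bytes
MIN_DURATION_SECONDS = 5


def check_upload(filename, size_bytes, duration_seconds=None):
    """Return a list of problems; an empty list means the file passes."""
    problems = []
    ext = Path(filename).suffix.lower()
    if ext not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: {ext or '(none)'}")
    if not MIN_SIZE_BYTES <= size_bytes <= MAX_SIZE_BYTES:
        problems.append("file size must be between 10 KB and 5 GB")
    # Assumption: duration is only checked when it is known.
    if duration_seconds is not None and duration_seconds < MIN_DURATION_SECONDS:
        problems.append("duration must be at least 5 seconds")
    return problems
```

For example, `check_upload("interview.MP4", 50_000_000, 1800)` returns an empty list, while a `.txt` file is reported as an unsupported format.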

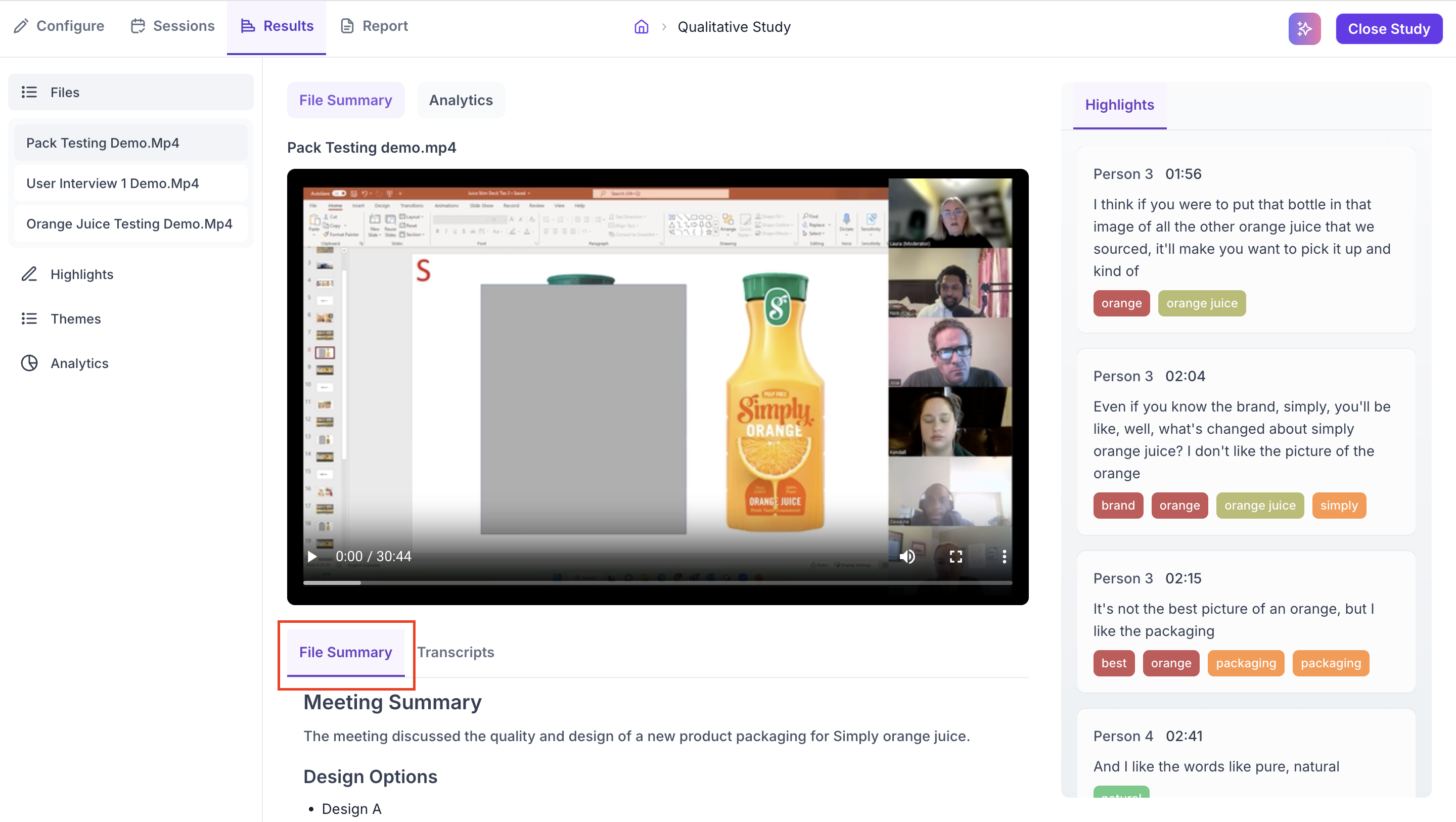

Click any recording to open it. Inside each recording there are two tabs at the top: File Summary and Analytics.

File Summary Tab

The File Summary tab contains the video player and two sub-tabs: File Summary and Transcripts.

File Summary

The File Summary sub-tab gives you a quick AI-generated overview of what happened in the session. It includes a Meeting Summary that describes the main topics discussed, followed by structured notes covering key points, decisions, or discussion areas from the session. This is useful for getting a fast understanding of a session before going into the full transcript, especially when reviewing recordings you did not moderate yourself.

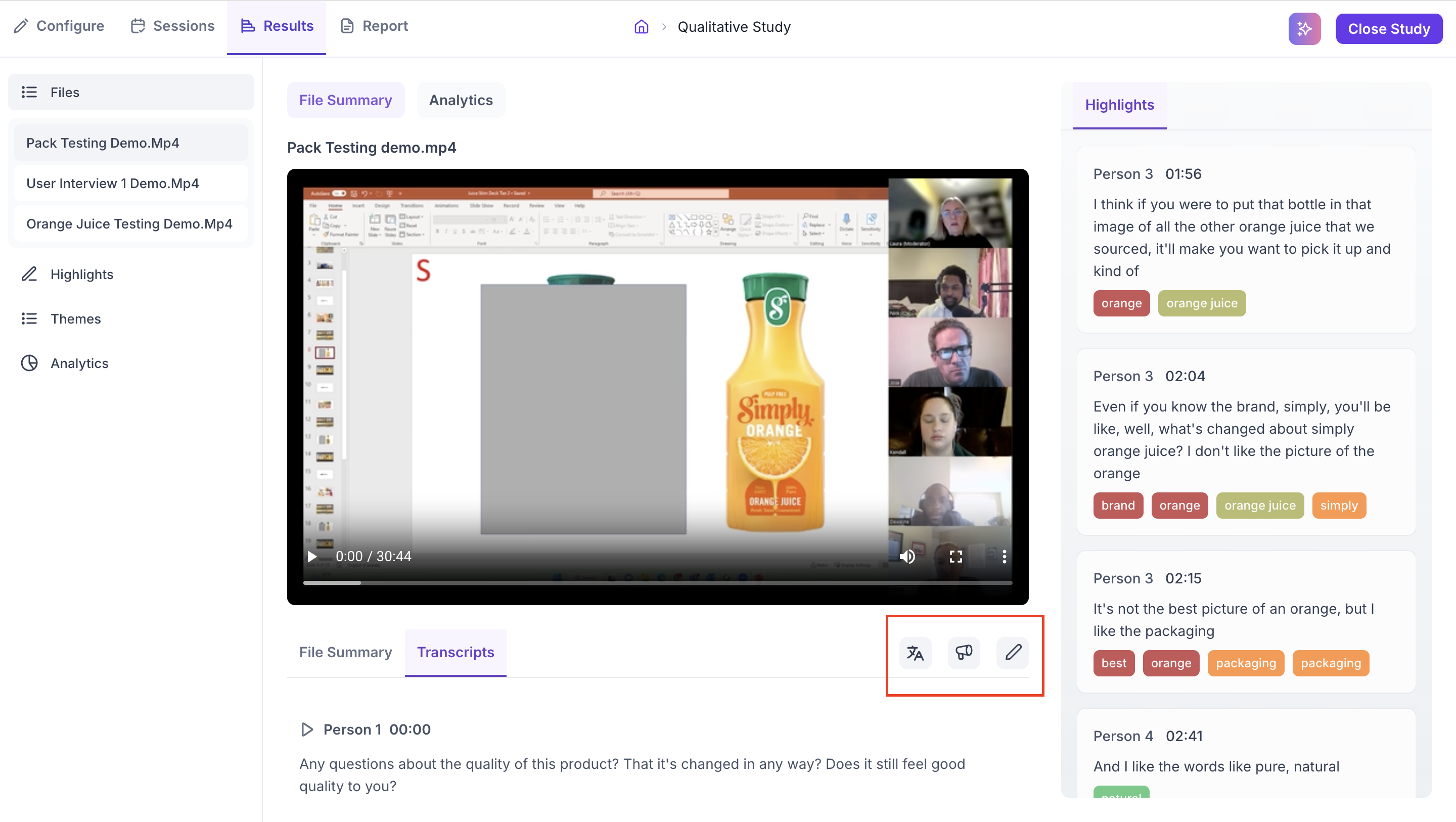

Transcripts

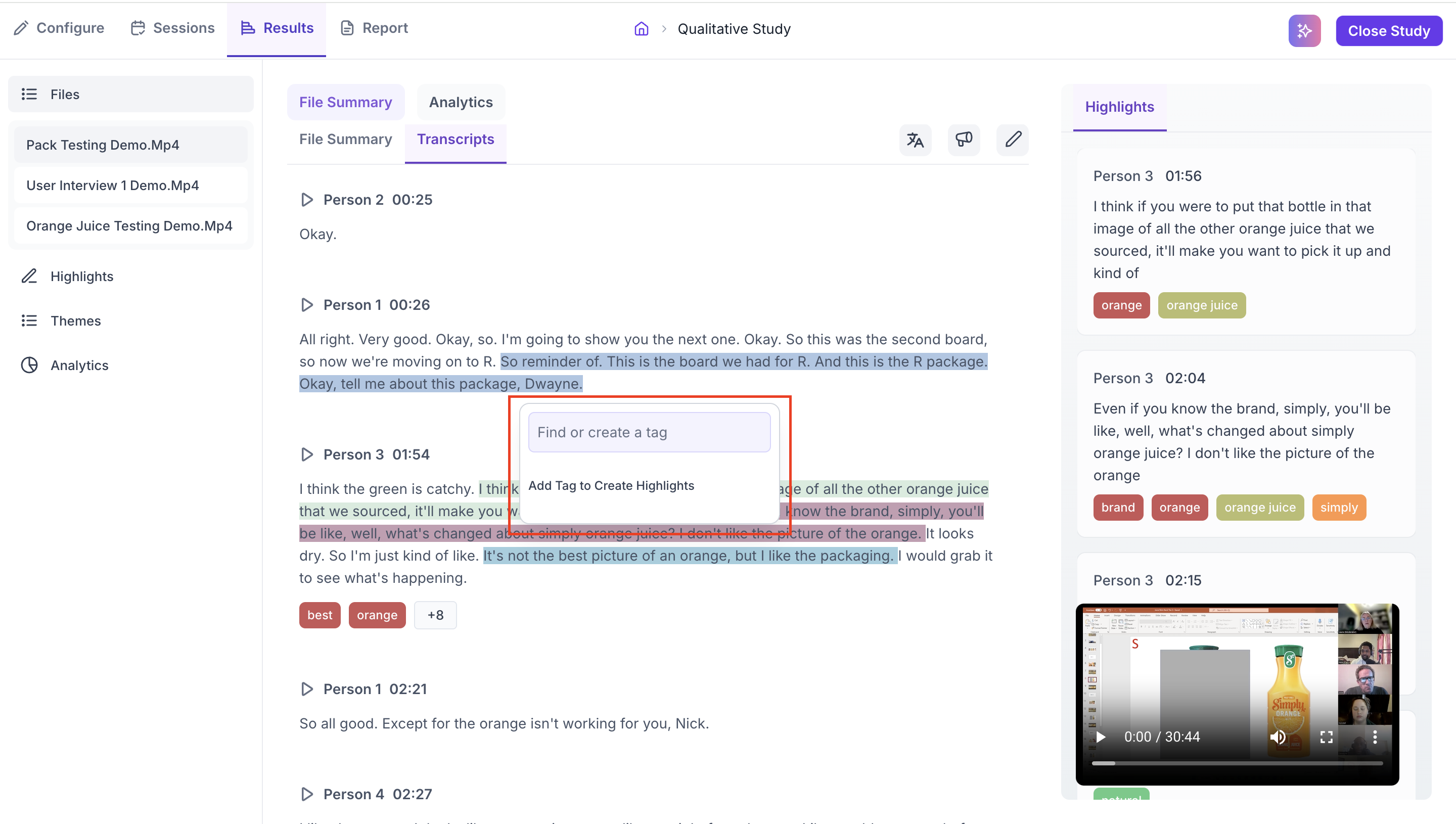

The Transcripts sub-tab shows the full, timestamped record of everything said during the session. Each block of speech is attributed to a speaker with a play button that jumps to that exact moment in the video. The right panel shows all Highlights created for this file so far.

Three icons in the toolbar give you additional options.

- Translate: Translates the transcript into any of 100+ languages. Use this when participants spoke in a different language from your working language, or when you need to share the transcript with a team that works in a different language.

- Speaker Label: Opens the Speakers List panel. Decode automatically detects how many distinct speakers are present and lists each one with a sample audio clip so you can identify who is who. Assign each speaker a role (Moderator or Participant) and a display name. Speaker labels are used in the Analytics tab to calculate speaking time per person and attribute emotional signals correctly. Complete this step before reviewing Analytics to ensure the data is accurate.

- Edit: Allows you to correct any errors in the auto-generated transcript. Use this when words have been misheard or incorrectly transcribed in a way that affects the meaning of what was said.

To create a highlight, select any text in the transcript. A prompt appears where you can search for an existing tag or create a new one. Once a tag is applied, that moment becomes a Highlight linked to the video at that timestamp. The Highlight also appears in the right panel and in the Highlights section of the left navigation. Tags feed into the Themes section, where Decode shows the emotion breakdown per topic across all sessions.

Analytics Tab

The Analytics tab shows quantitative data derived from the individual session recording. It includes the file details and five data sections.

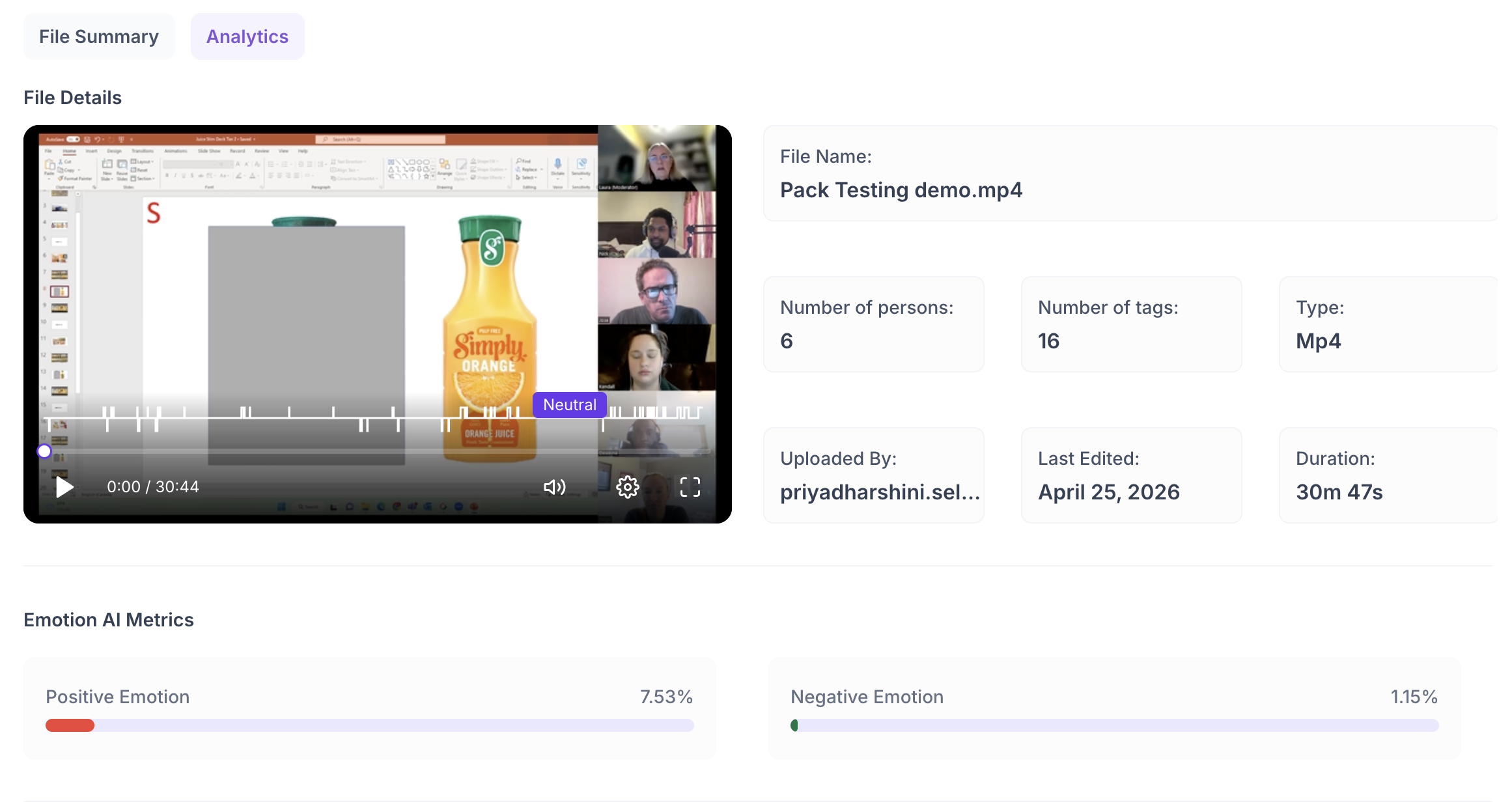

File Details

Shows the key metadata for the recording: file name, number of people detected, number of tags applied, file type, who uploaded it, when it was last edited, and total duration. Use this to confirm you are reviewing the correct file and to check how much tagging has been done on it so far.

Emotion AI Metrics

Shows the Positive and Negative emotion percentages for the session as progress bars. Positive emotion reflects moments where the participant displayed positive facial expressions. Negative emotion reflects moments of frustration, confusion, or displeasure. Use these percentages as a starting point before going into the Emotion Phase Metrics to understand when these emotions occurred during the session.

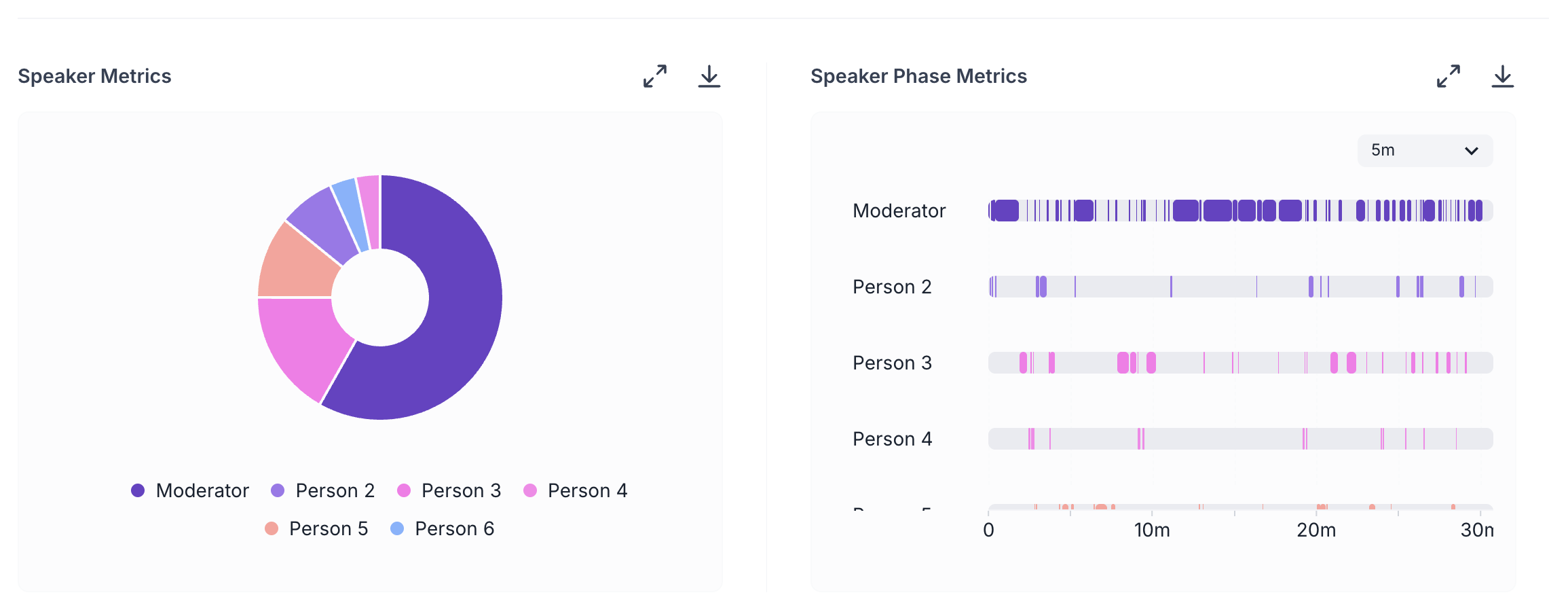

Speaker Metrics

A chart showing the proportion of speaking time attributed to each person in the session. Each speaker is shown as a separate colour-coded segment. In a well-run session, participants typically speak more than the moderator. Use this chart to check the balance of speaking time. If the moderator's share is significantly higher than the participant's, it may indicate the session was led too heavily, which can affect the quality of participant responses.
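To make the idea of a speaking-time share concrete, here is a minimal sketch of how such a proportion could be computed from labelled speech segments. It illustrates the arithmetic only and is not Decode's implementation; the segment format and the function name `speaking_share` are assumptions.

```python
from collections import defaultdict


def speaking_share(segments):
    """Return each speaker's share of total speaking time, in percent.

    segments: iterable of (speaker, start_seconds, end_seconds) tuples.
    This input format is an assumption made for illustration.
    """
    totals = defaultdict(float)
    for speaker, start, end in segments:
        totals[speaker] += max(0.0, end - start)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {
        speaker: round(100 * seconds / grand_total, 1)
        for speaker, seconds in totals.items()
    }


# Hypothetical five-minute session.
segments = [
    ("Moderator", 0, 30),
    ("Participant", 30, 120),
    ("Moderator", 120, 150),
    ("Participant", 150, 300),
]
shares = speaking_share(segments)
```

In this hypothetical session the moderator speaks for 60 of 300 seconds, so `shares` shows 20.0 for the moderator and 80.0 for the participant, which is the kind of balance the chart helps you confirm.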

Speaker Phase Metrics

A timeline showing when each speaker was active during the session. Each person has their own row, and their speaking moments are marked across the full duration of the recording. You can view this at 1-minute, 2-minute, or 5-minute intervals. Use this to understand the flow of conversation, identify long stretches where only the moderator was speaking, or check whether a participant was silent for an extended period.

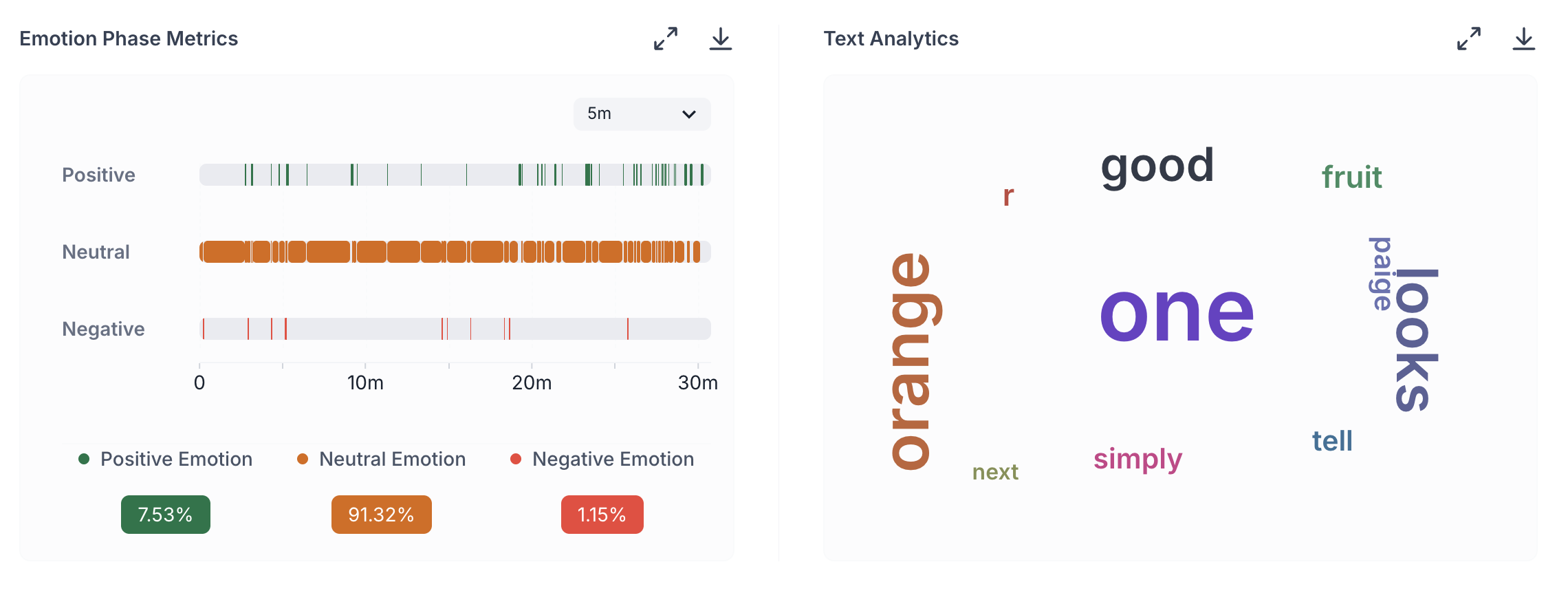

Emotion Phase Metrics

A timeline showing when Positive, Neutral, and Negative emotions occurred throughout the session. Each emotion type has its own row. Switch between 1-minute, 2-minute, or 5-minute intervals depending on how granular you need the view to be. Use this alongside the transcript to connect emotional reactions to specific moments in the conversation. If negative emotion clusters in a particular part of the session, go to the transcript at that timestamp to see what was being discussed.
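The interval view can be thought of as grouping timestamped emotion readings into fixed-width buckets. The sketch below shows that grouping and how a cluster of negative emotion could be located. It is an illustration under assumed inputs (a list of `(timestamp_seconds, emotion)` pairs), not a description of how Decode computes the timeline.

```python
from collections import Counter


def bucket_emotions(samples, interval_minutes=1):
    """Group (timestamp_seconds, emotion) samples into fixed-width intervals.

    Returns {interval_index: Counter of emotions}. Interval 0 covers the
    first `interval_minutes` of the recording.
    """
    width = interval_minutes * 60
    buckets = {}
    for timestamp, emotion in samples:
        index = int(timestamp // width)
        buckets.setdefault(index, Counter())[emotion] += 1
    return buckets


def most_negative_interval(buckets):
    """Return the interval index with the most Negative samples, or None."""
    if not buckets:
        return None
    index = max(buckets, key=lambda i: buckets[i]["Negative"])
    return index if buckets[index]["Negative"] > 0 else None


# Hypothetical readings from one session.
samples = [
    (10, "Neutral"),
    (70, "Negative"),
    (95, "Negative"),
    (130, "Positive"),
    (200, "Negative"),
]
one_minute = bucket_emotions(samples, interval_minutes=1)
two_minute = bucket_emotions(samples, interval_minutes=2)
```

With 1-minute intervals, the negative readings at 70 and 95 seconds fall in interval 1 (the second minute), which is where you would go in the transcript. With 2-minute intervals the same readings merge into interval 0, showing why a wider interval gives a coarser view.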

Text Analytics

A word cloud showing the most frequently used terms across the session transcript. Words that appear larger were used more often. Use this to understand the language participants naturally reach for when discussing the topic. Terms that appear prominently across multiple sessions reflect the vocabulary your users associate with the product or experience, which is useful when writing survey questions, product copy, or discussion guides for follow-up research.
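A word cloud is essentially a visual form of a term-frequency count. If you export a transcript and want a rough equivalent as a list, a sketch like the one below would do it. The stop-word list and the function name `top_terms` are illustrative assumptions, and Decode's own word cloud may filter and weight terms differently.

```python
import re
from collections import Counter

# A tiny illustrative stop-word list; real tools use much longer ones.
STOP_WORDS = {
    "the", "a", "an", "and", "to", "of", "is", "it", "i", "was", "in", "that",
}


def top_terms(transcript, n=5):
    """Return the n most frequent non-stop-word terms with their counts."""
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(word for word in words if word not in STOP_WORDS)
    return counts.most_common(n)


# Hypothetical transcript excerpt.
transcript = (
    "The checkout was confusing. I found the checkout slow, "
    "and the search was confusing too."
)
terms = top_terms(transcript, n=2)
```

Here "checkout" and "confusing" each appear twice and would be the largest words in a cloud built from this excerpt.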

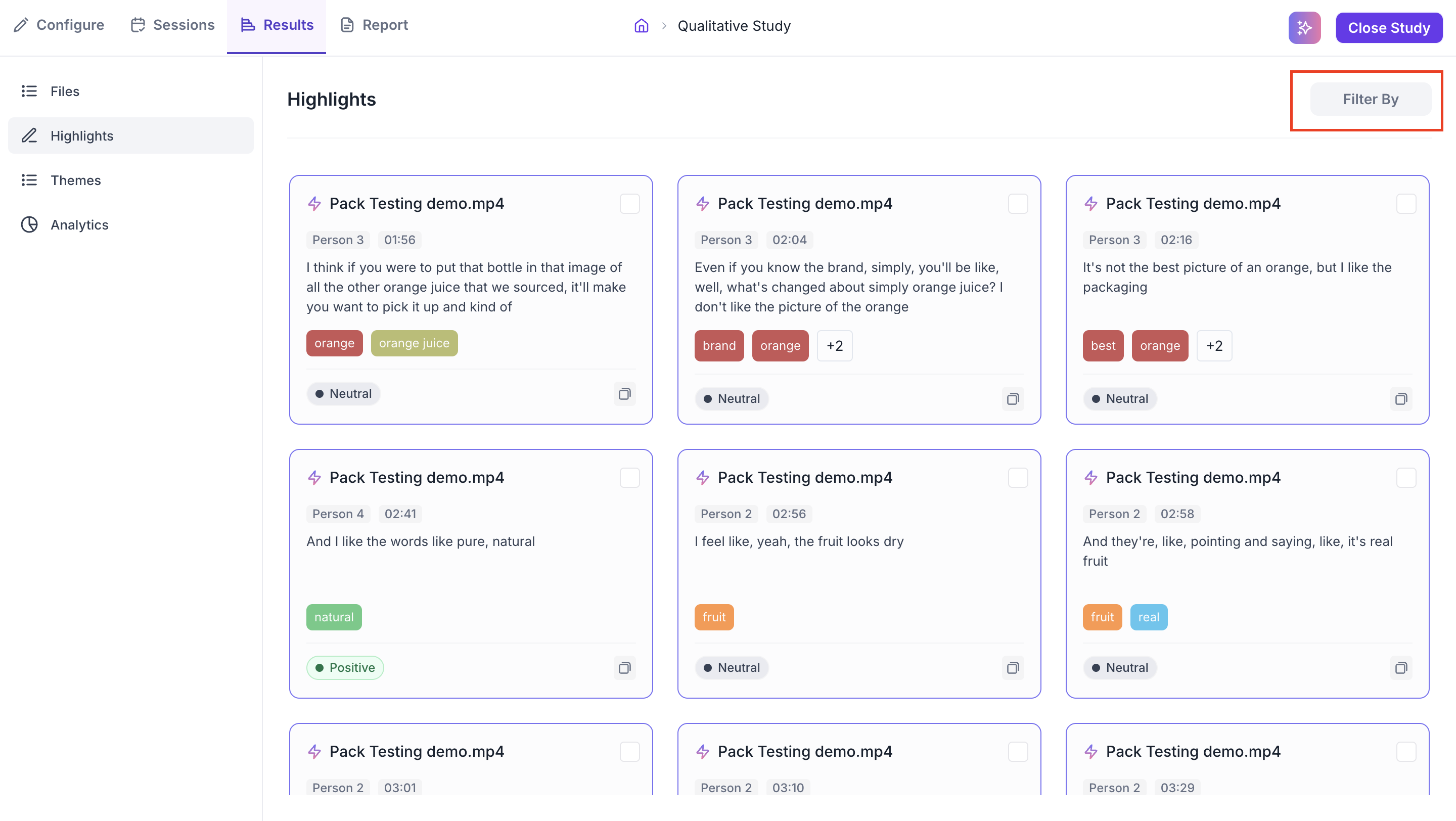

HIGHLIGHTS

The Highlights section collects every tagged moment from every file in the study into a single view. Rather than going back into individual transcripts to find a specific moment, you can see all your tagged evidence in one place and navigate across sessions efficiently.

Each highlight card shows the following.

- File name: Which recording the highlight came from.

- Speaker: Who was speaking at that moment.

- Timestamp: The exact point in the video where the moment occurred.

- Transcript excerpt: What was said.

- Tags: The tags applied to the moment.

- Detected emotion: Whether the emotion at that moment was Positive, Neutral, or Negative, based on facial coding.

Highlights are most useful when you are preparing findings to present to your team or writing a research report. Instead of describing what a participant said from memory, you can point directly to a specific moment with the exact words, the speaker, the timestamp, and the emotional context all in one place.

Use the Filter By dropdown in the top right to narrow highlights to a specific file. This is useful when you want to review all tagged moments from a single session. When you want to look across the full study to see which moments came up across multiple participants, leave the filter unset.

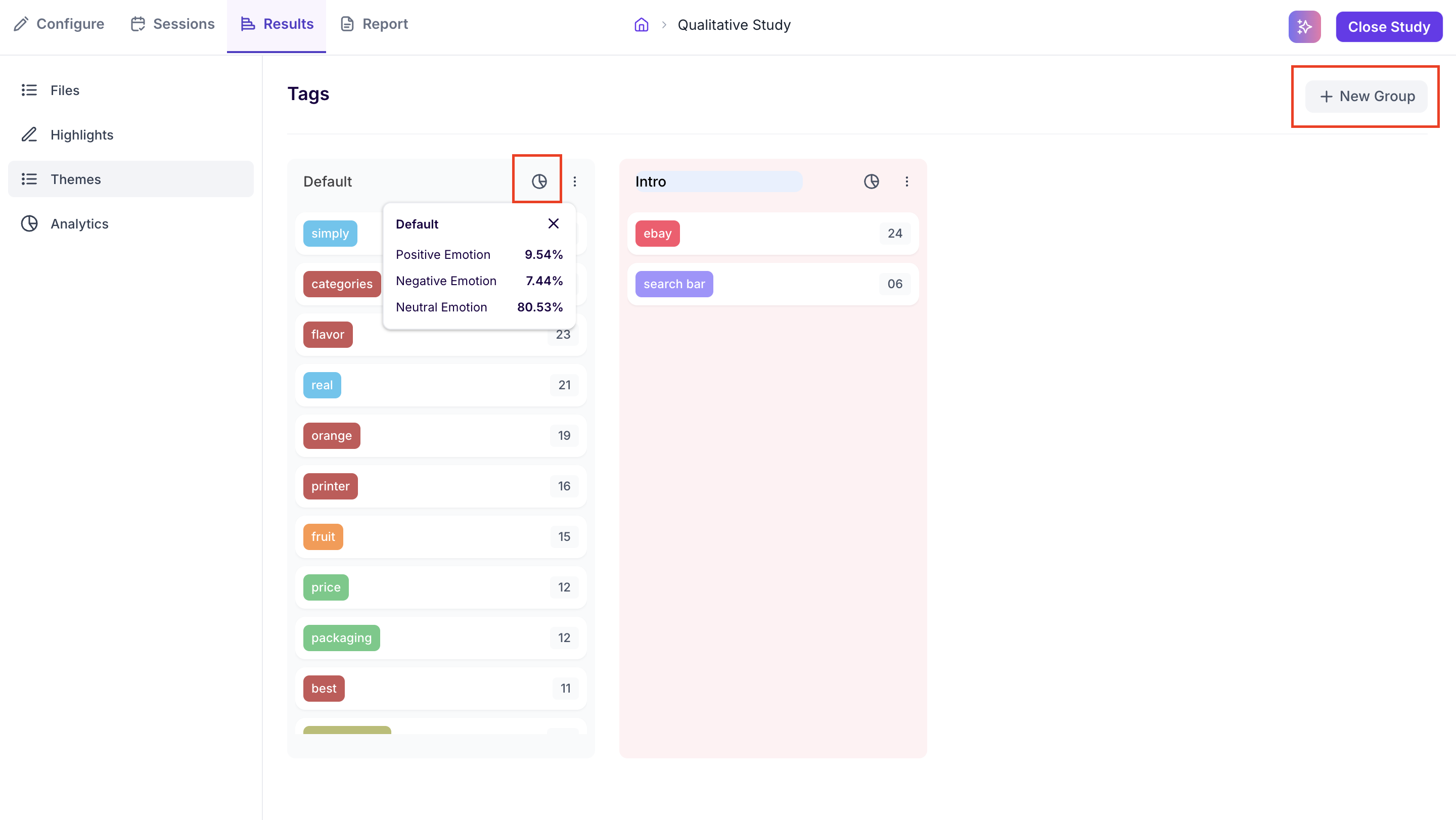

THEMES

The Themes section is where you organise and analyse the tags you have created across all sessions. It shows all tags grouped into tag groups, along with the emotion data associated with each group. This section works best when you have tagged consistently across all files.

Tag Groups

All tags appear under a Default group automatically. You can create additional groups by clicking New Group in the top right. Tag groups are useful for organising tags by topic, research question, or any other category that is meaningful to your study. To move a tag into a different group, drag and drop it from one group to another. This lets you reorganise your tags as your analysis develops without losing any of the underlying highlights.

Each tag row shows the tag name and the number of times it was applied across all sessions. This count gives you a quick sense of how frequently a specific topic came up across the study.

Emotion Breakdown per Group

Hovering over a tag group name reveals the emotion breakdown for that group: the proportion of Positive, Negative, and Neutral emotion across all moments tagged within it. This tells you not just how often a topic came up, but how participants felt when it did.

For example, if you have a tag group for navigation and the emotion breakdown shows a high proportion of Negative emotion, it indicates that participants consistently reacted negatively when navigation topics came up across sessions. This level of insight is only possible when tagging has been applied consistently across all files in the study.
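The navigation example above comes down to a simple proportion: of all highlights tagged within a group, what share carried each emotion. The sketch below works through that calculation with made-up data. The data shapes and the function name `emotion_breakdown` are assumptions for illustration, not Decode's internal method.

```python
from collections import Counter

EMOTIONS = ("Positive", "Neutral", "Negative")


def emotion_breakdown(highlights, tag_groups):
    """Return {group_name: {emotion: percentage}}.

    highlights: list of (tag, emotion) pairs, one per tagged moment.
    tag_groups: {group_name: set of tag names}.
    Both shapes are assumptions made for this illustration.
    """
    breakdown = {}
    for group, tags in tag_groups.items():
        counts = Counter(emotion for tag, emotion in highlights if tag in tags)
        total = sum(counts.values())
        breakdown[group] = {
            emotion: round(100 * counts[emotion] / total, 1) if total else 0.0
            for emotion in EMOTIONS
        }
    return breakdown


# Hypothetical highlights gathered across several sessions.
highlights = [
    ("menu", "Negative"),
    ("menu", "Negative"),
    ("search", "Neutral"),
    ("search", "Negative"),
    ("pricing", "Positive"),
]
tag_groups = {"Navigation": {"menu", "search"}, "Default": {"pricing"}}
result = emotion_breakdown(highlights, tag_groups)
```

In this made-up data, three of the four Navigation highlights are Negative, so the group shows 75.0% Negative. The calculation also shows why consistent tagging matters: a moment that is never tagged is never counted in any group.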

ANALYTICS

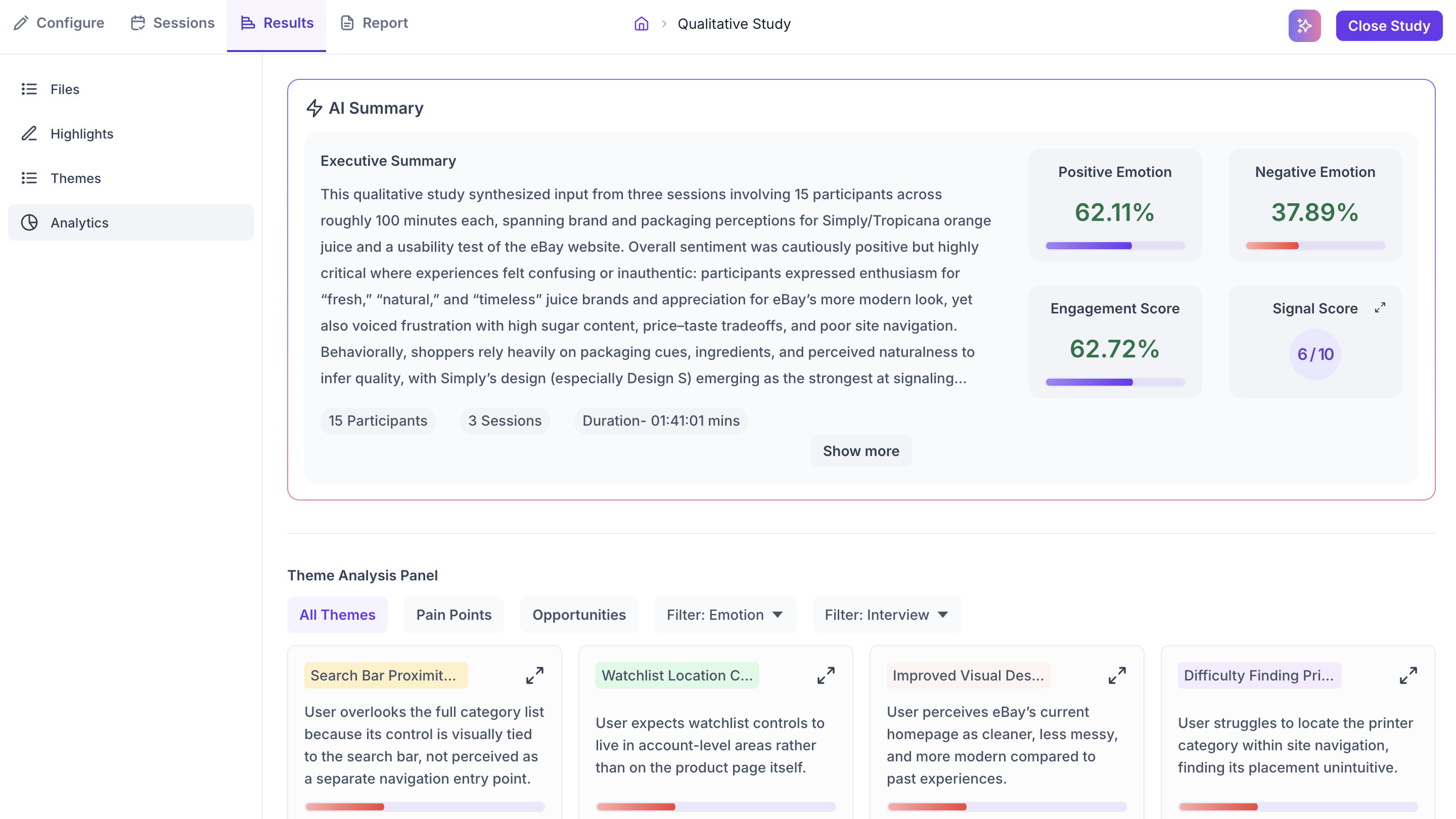

The Analytics section brings together AI-generated insights from across all your sessions. It covers four areas: AI Summary, Theme Analysis Panel, Question-Wise Insights, and Top Opportunity Areas and Strategic Recommendations.

AI Summary

The AI Summary generates an Executive Summary of the entire study. It covers the main topics discussed across all sessions, the overall emotional sentiment, and what the data suggests is working well and what needs attention. The summary also shows the total number of participants, sessions, and combined duration. Use Show More to expand the full summary and Show Less to collapse it.

Four metrics appear alongside the summary.

Positive Emotion %

The percentage of moments across all sessions where participants displayed positive facial expressions. A high positive percentage indicates participants responded well to the topics or experiences being discussed. Use this alongside the Negative Emotion % to understand the overall emotional tone of the study before going into specific themes.

Negative Emotion %

The percentage of moments across all sessions where participants displayed negative facial expressions such as confusion, frustration, or displeasure. A high negative percentage signals that certain parts of the sessions triggered strong negative reactions. Use this alongside the Emotion Phase Metrics in the individual file Analytics tab to identify exactly when and where these reactions occurred.

Engagement Score

A measure of how actively participants engaged during the sessions, based on their facial expressions. It reflects the intensity and variety of facial movement captured throughout the recordings. A high score indicates participants were visibly expressive and reactive during the sessions. A low score may mean participants showed minimal facial expression, which can happen due to poor camera quality, insufficient lighting, or sessions that did not prompt strong visible reactions. The score is shown as a percentage.

Signal Score

A composite quality rating out of 10 that tells you how reliable the emotional data from your sessions is overall. Click the expand icon on the Signal Score card to open a detailed breakdown. Three factors each contribute to the overall score.

- Facial Signal: Reflects the quality of the facial data captured. Affected by camera resolution, lighting conditions, whether the participant's face was fully visible, and how much they moved during the session.

- Voice Signal: Reflects the clarity of the audio. Poor audio quality such as background noise, low microphone volume, or overlapping speech reduces the reliability of voice-based emotional signals.

- Text Clarity: Reflects the accuracy of the auto-generated transcript. Clear speech with minimal crosstalk produces a more accurate transcript, which improves the quality of text-based analysis.

Each factor is rated individually as Low, Fair, Good, Very Good, or Excellent. The overall Signal Score follows the same scale. A score of 6 to 7 is rated Good. A score below 4 is Low, which means the emotional data has significant reliability issues.

A low Signal Score affects the emotion percentages and emotion-related analytics. Transcript-based findings are not affected. If your Signal Score is low, treat emotion data with caution but continue to rely on the transcript and highlights for your findings.

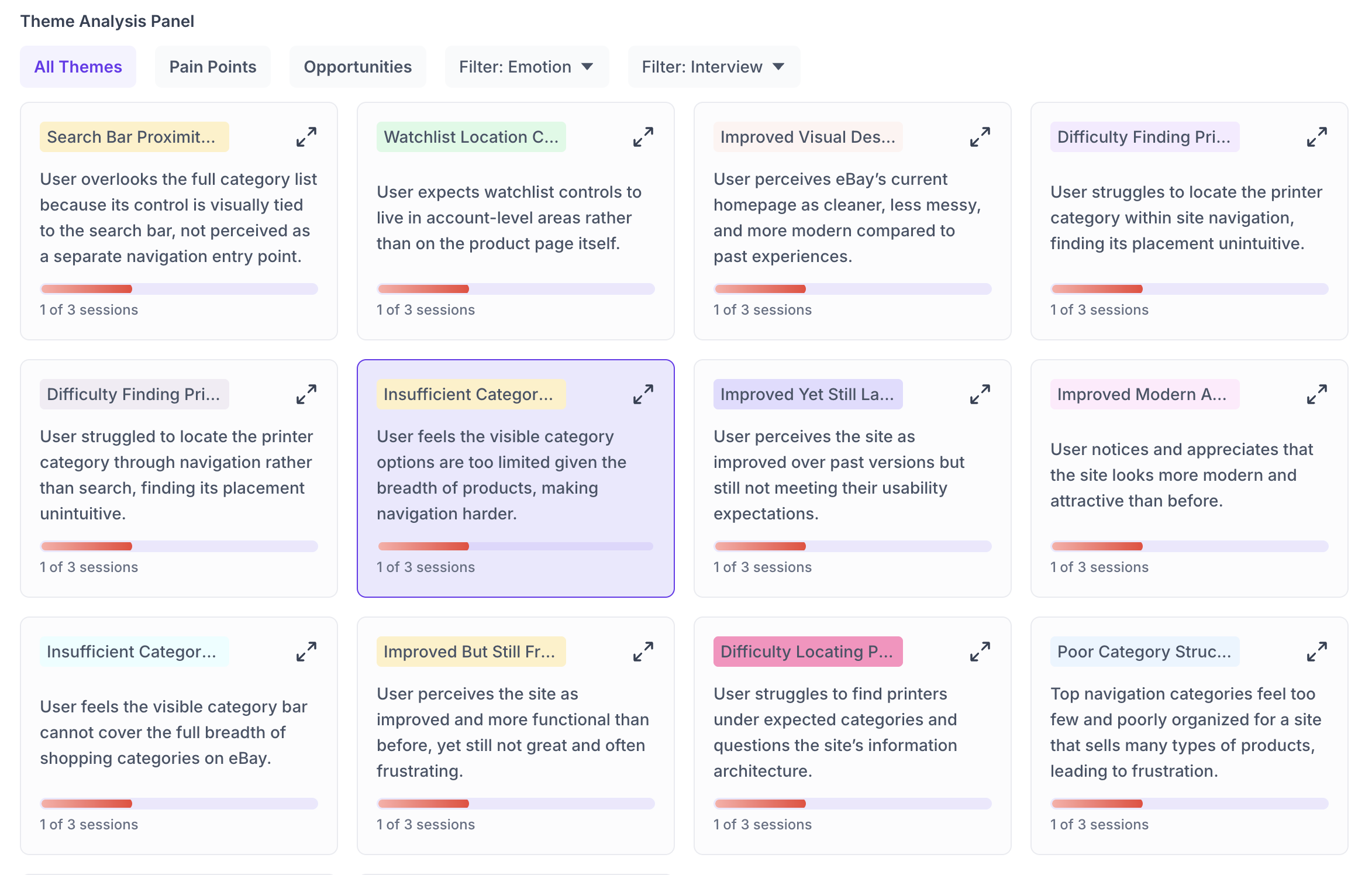

Theme Analysis Panel

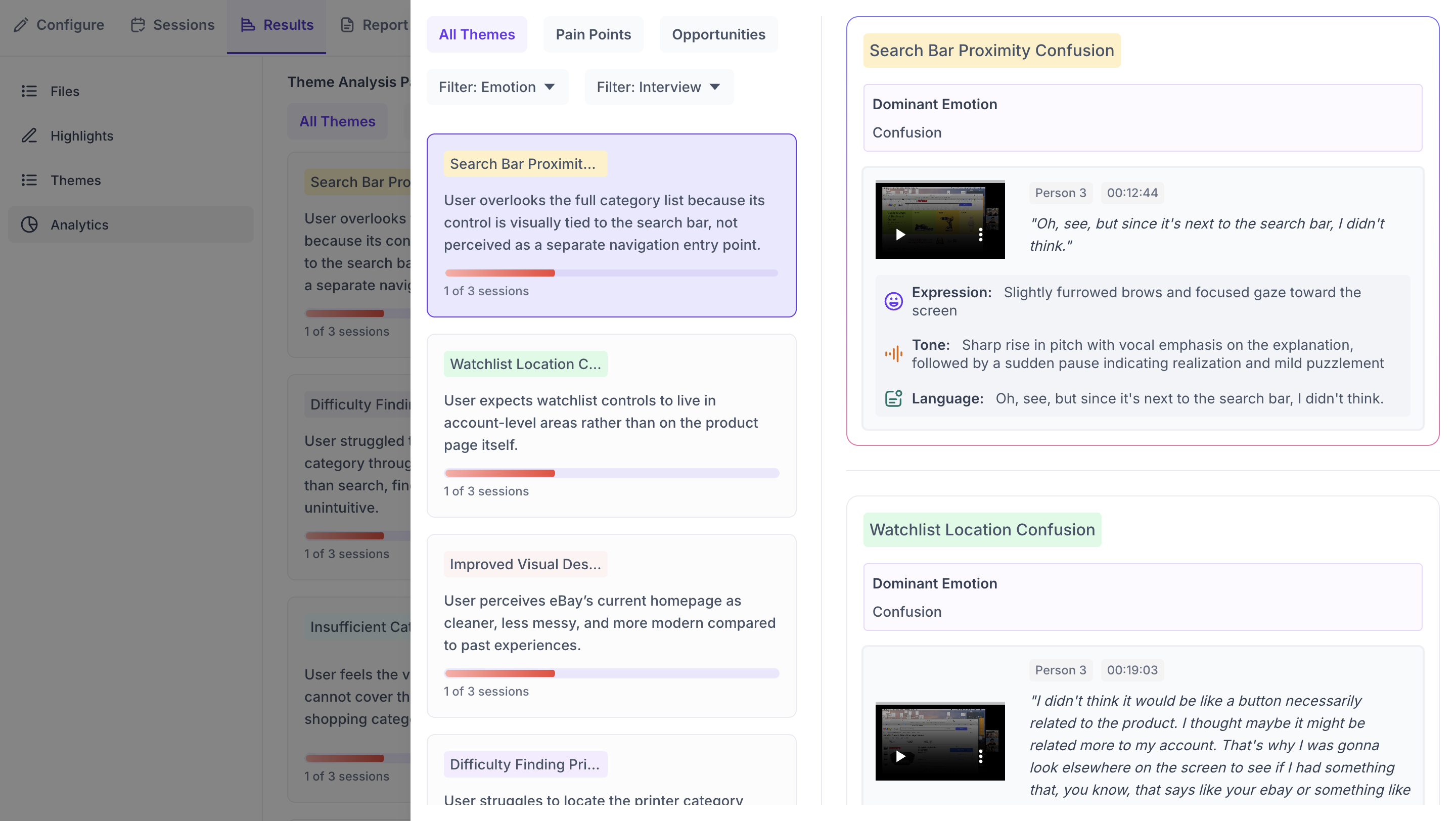

The Theme Analysis Panel shows AI-identified patterns from across all your sessions as individual theme cards. Decode analyses the transcripts and emotional data from every session and groups recurring patterns into named themes automatically. Each theme card shows a theme name, a one-line description of the pattern, the number of sessions it appeared in, and a bar indicating the strength of the emotional signal associated with it.

The session count on each card is the most important indicator when assessing a theme. A theme that appeared in only one session reflects a single participant's experience. A theme that appeared across multiple sessions independently is a recurring pattern. Always check the session count before deciding how much weight to give a theme in your findings.

Use the tabs and filters at the top of the panel to narrow the themes shown.

- All Themes: Shows every theme Decode identified across all sessions.

- Pain Points: Shows only themes that reflect difficulty, frustration, or problems participants encountered.

- Opportunities: Shows themes that suggest areas where the experience worked well or where there is potential for improvement.

- Filter: Emotion: Narrows themes by the dominant emotion detected within them. Use this when you want to find specifically where participants expressed confusion, frustration, or positive reactions.

- Filter: Interview: Narrows themes to a single session. Use this when you want to understand one specific participant's experience in depth.

Click the expand icon on any theme card to open the detailed view. The detailed view shows the theme name, the dominant emotion detected, and supporting video clips with timestamps. Each clip shows a breakdown of the participant's Expression, Tone, and Language at that moment, giving you a closer look at what they were experiencing. The video clip options let you view the clip in Full screen, Download it, Mute the audio, adjust the Playback speed, or switch to Picture-in-picture mode.

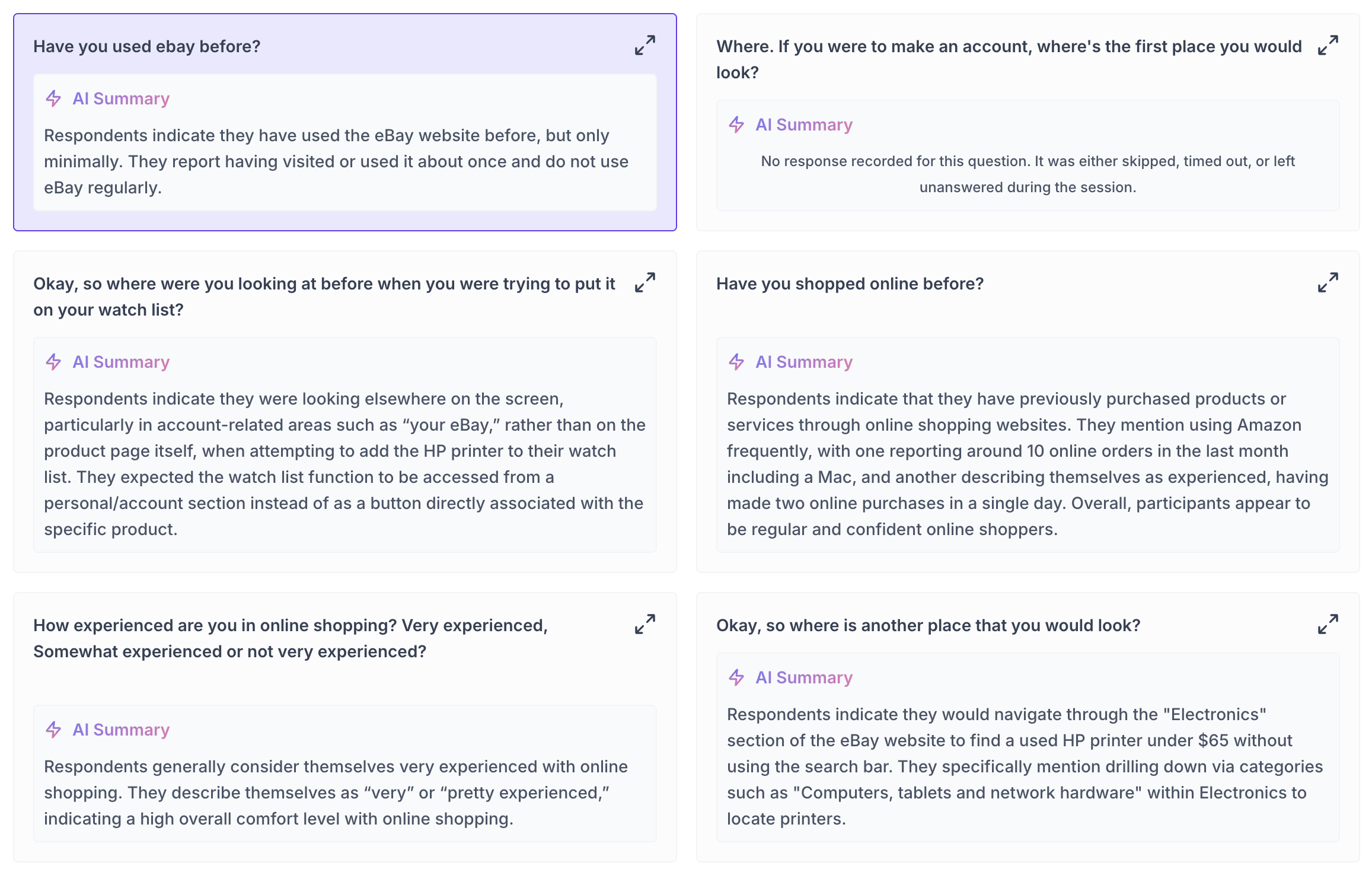

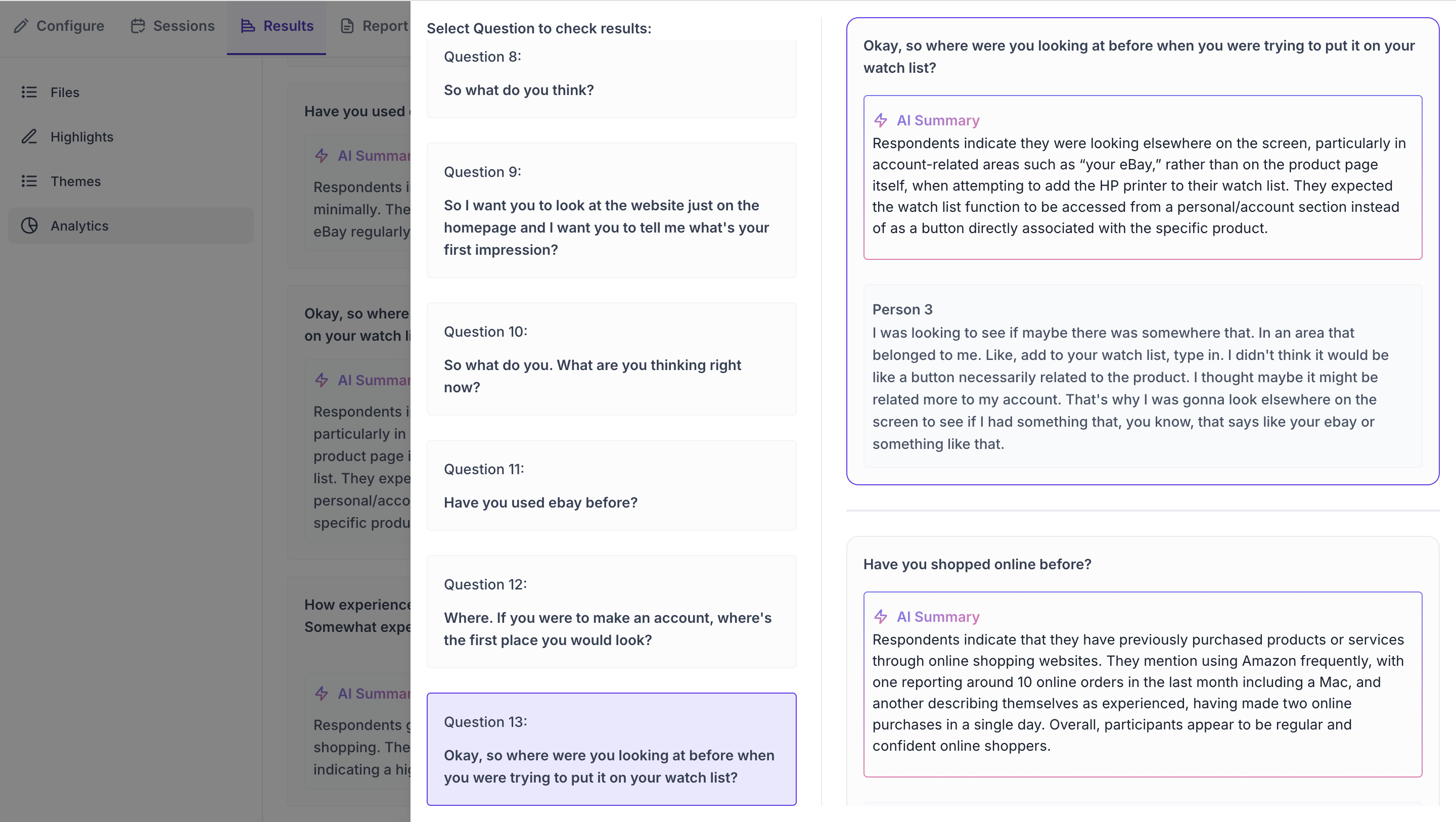

Question-Wise Insights

If your sessions included structured questions asked by the moderator, the Question-Wise Insights section shows how participants responded to each question across all sessions. Each question has its own card in the main view with an AI Summary of the collective responses. If no response was recorded for a question, the card will indicate the question was skipped, timed out, or left unanswered during the session.

Click the expand icon on any question card to open the detailed view. The left panel shows the full list of questions from the session. Click any question on the left to see its results on the right. The right panel shows the AI Summary for that question at the top, followed by the full verbatim response from each participant. Read the summary first to understand the overall pattern, then read the individual responses to see the full range of what was said across participants.

Top Opportunity Areas and Strategic Recommendations

At the bottom of the Analytics section, Decode generates two AI-powered outputs based on everything collected across all sessions.

- Top Opportunity Areas: A list of the areas where participant feedback points to the greatest potential for improvement. Each opportunity area is drawn from patterns identified across themes, pain points, and participant responses throughout the study.

- Strategic Recommendations: A list of specific, actionable suggestions for how the team could respond to the opportunity areas. These are generated by Decode based on the full body of evidence from the study and are intended to give the team a starting point for planning next steps.